A lesson from history – why workers today might sabotage emerging workplace technology.

The tsunami of artificial intelligence – AI – that has swamped many facets of people’s lives, communications, IT-based activities, leisure, and employment is relentless, and the flooding will likely penetrate even further into the uplands of society and the economy. The force of AI is irresistible: it has commercial momentum, and there are few ways for a person to ‘opt out’ of AI. People need to brace against the force of the AI flood, knowing they are going to be drenched, perhaps even submerged.

There has been a steady and increasing downpour of media and academic reporting about how AI is making some people feel uneasy in the workplace. People are reportedly concerned that AI will threaten their employment. People are worried about the quality of AI-derived workplace information. People fret that AI will take over decision-making without a person being part of the process. Some people appreciate that AI in the form of large language models (LLMs) can be corrupted by adversaries or vested interests pumping huge amounts of distorting ‘facts’ into the LLM pool, thus producing adulterated answers on which people may unwittingly rely, to their detriment.

Given the uncertainties that stem from AI, it seems that AI can provoke an insider threat response in the workplace. We know from Shaw and Sellers’ (2015) widely accepted Critical Pathway to Insider Risk model that one of the four steps to becoming an insider threat is the presence of stressors, whether personal, professional, or financial. AI can be such a workplace stressor.

In October 2024, Gallup research showed that only 6% of employees felt very comfortable using AI in their roles, and about 16% were very or somewhat comfortable. By contrast, about 32% of employees said they were very uncomfortable using AI in their roles.

In February 2025, the Pew Research Center reported that 52% of workers say they feel worried about how AI may be used in the workplace. Some 36% say they feel hopeful about AI, 33% feel overwhelmed, and 29% feel excited. Workers’ views vary by age, education and income levels.

In March 2025, a survey by the AI start-up Writer recorded that among malicious insiders who admitted to sabotaging their company’s AI initiative, about 30% said that “AI diminishes their value or creativity”, while 28% said they didn’t want AI to take their job. Respondents also complained that the AI they were using at work was low-quality and had too many security issues.

Writer’s report goes to the heart of the matter of AI in the workplace – AI can be a trigger for insider threat activity.

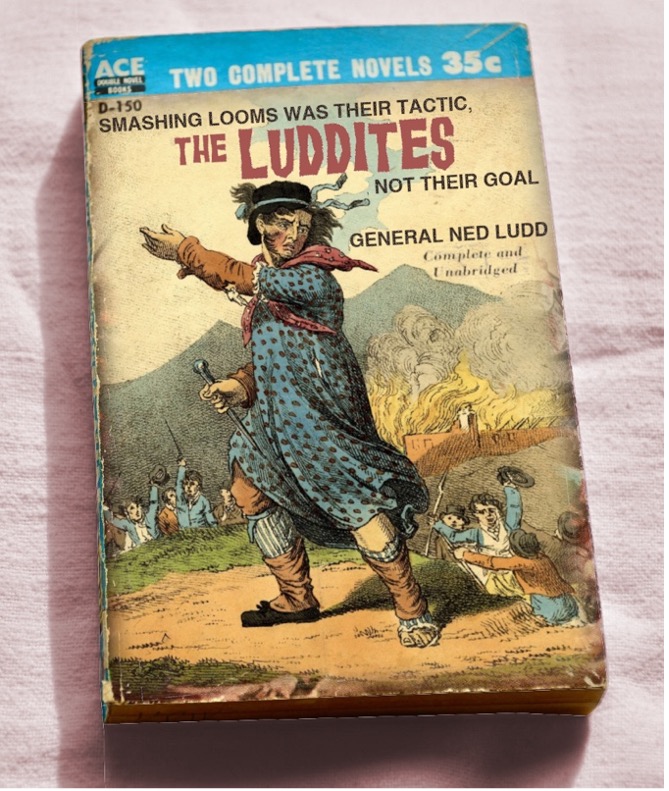

However, this type of human response to technology, namely sabotage, is not new. In Britain circa 1811–1817, the Luddite movement emerged during the harsh economic climate of the Napoleonic Wars, which saw working conditions worsen in the new textile factories born of the Industrial Revolution. The Luddite disturbances were rooted in economic and social upheaval.

Today, the term ‘Luddite’ describes a person who is opposed to, or inept at dealing with, modern technology, but back in the 1800s the Luddites were neither opposed to technology nor inept at using it. Many Luddites were highly skilled machine operators in the textile industry, worried about being displaced by increasingly efficient machines. The Luddites, according to Kevin Binfield, editor of the 2004 *Writings of the Luddites*, “were totally fine with machines”, confining their attacks to manufacturers who used machines in what the Luddites called “a fraudulent and deceitful manner” to get around standard labour practices. “They just wanted machines that made high-quality goods, and they wanted these machines to be run by workers who had gone through an apprenticeship and got paid decent wages. Those were their only concerns.”

So, some 210 years later, we find ourselves facing the same perceptions and fears about technology that the Luddites suffered; when they smashed machines, they became insider threats.

Binfield’s comment, that the workers just wanted machines that made high-quality goods and that these machines be run by workers who had gone through an apprenticeship and were paid decent wages, aligns with contemporary workers’ reported fears about AI displacing and devaluing the skills, education, employment certainty, and creativity that people bring to the workplace.

Further, workers in most Western societies today are experiencing challenging circumstances similar to those that spurred the Luddites to emerge and act (arguably with worse in prospect): political ennui, ineffective governance, economic stress, social fracture, uncertainty, and military conflict.

All the ingredients are present for workers to see AI not merely as a significant change but as a threat.

To mitigate the insider threat with respect to AI, what might employers do?

- Communicate – clear and plentiful communication from leaders to employees about the use of AI in the workplace is the foundation for cultivating positive views about AI and trust in the technology.

- Employees’ views – ensure there is an established and safe way for employees to easily communicate their views, concerns, and suggestions, including anonymously, about the implementation of AI in the workplace.

- Change management – introducing AI is a significant organisational change process. Utilise the organisation’s change management frameworks and experience to embed AI and support a smooth and inclusive transition.

- Transparency – if AI is to result in workforce changes and employee separations, then leaders need to be clear and transparent about this and act sensitively and ethically towards the people involved.

- Insider Threat Program – have one, as this provides a mechanism to identify disgruntlement and aberrant behaviour in the workplace which may stem from AI implementation.

- Training and upskilling – provide training and upskilling opportunities to help employees adapt to new technology and see AI as an enabler rather than a threat.

- Leadership modelling – leaders should model openness to AI and be seen actively engaging with the technology to build trust and acceptance.

In closing, AI is here to stay and will become a more pervasive and powerful force in the workplace. As surveys show, people will react to AI in different ways: some embrace AI, some are unsure, and some are fearful and opposed to AI. This opposed group is likely to spawn insider threats who may target AI or cause other harms in the workplace.

In this context, you might label today’s AI-driven insider threats who sabotage AI capabilities not as Luddites but as LuddAItes!